When Steel Plants Run Smooth… and Still Lose Millions

How Prescriptive Maintenance and Condition Monitoring Catch the Process Faults That DCS Alarms Miss

Read Time: 8–9 minutes | Author – Kalyan Meduri

Steel plants rarely fail loudly. They fail quietly. The tap happens on time. The cast continues. The rolls turn. And yet energy consumption creeps up, emissions intensity worsens, refractory life collapses early, electrodes burn faster, operators compensate manually, and the cost is discovered weeks later on the power bill, fuel ledger, or sustainability report. This is the hidden reality of process-induced faults in steel manufacturing: faults born not out of breakdown, but out of operating drift. Closing that gap requires prescriptive maintenance that goes beyond alarms, and condition monitoring that reads the signals conventional systems never correlate.

Blast Furnace

The fault that hides in plain sight

- Coke degradation altering permeability

- Uneven burden descent

- Minor cooling-water deterioration at specific tuyeres

- Drift in oxygen enrichment or PCI injection patterns

10–30 kg/thm

Fuel rate increase — without any furnace instability alarm

$0.8–1.2 M

Production loss per tuyere burnout emergency

How PlantOS™ intervenes

PlantOS™ monitors correlated patterns, not single tags:

• Spatial temperature deviations across tuyere cooling circuits

• Raceways behaving differently despite ‘normal’ absolute values

• Cooling delta and gas flow patterns that do not match expected furnace physics

Instead of waiting for a threshold breach, PlantOS™ flags emerging imbalance clusters, prescribes targeted operating corrections (air distribution review, charging pattern adjustment, tuyere inspection), and allows operators to intervene before fuel rate inflation hardens into cost.
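The idea of flagging a spatial imbalance rather than a threshold breach can be sketched in a few lines. This is an illustrative sketch only, not PlantOS™ code: the function name `spatial_outliers`, the 3.5 cutoff, and the sample cooling-water readings are all assumptions made for the example. Each tuyere is compared against the ring median using a robust (median/MAD) score, so a circuit can be flagged even while its absolute value is still below any alarm limit.

```python
from statistics import median


def spatial_outliers(delta_t_by_tuyere, cutoff=3.5):
    """Return tuyere ids whose cooling-water delta-T deviates from the ring median.

    A robust z-score (median and median absolute deviation) is used so that
    one drifting tuyere does not distort the baseline it is compared against.
    The cutoff of 3.5 is an assumed, commonly used default, not a plant value.
    """
    values = list(delta_t_by_tuyere.values())
    med = median(values)
    mad = median(abs(v - med) for v in values)
    if mad == 0:
        return []  # all circuits identical: nothing to rank
    flagged = []
    for tuyere, value in delta_t_by_tuyere.items():
        robust_z = 0.6745 * (value - med) / mad
        if abs(robust_z) > cutoff:
            flagged.append(tuyere)
    return flagged


# Hypothetical delta-T readings in degrees C, one per tuyere cooling circuit
readings = {"T01": 4.1, "T02": 4.0, "T03": 4.2, "T04": 5.6, "T05": 4.1, "T06": 3.9}
print(spatial_outliers(readings))  # ['T04']
```

In this made-up ring, T04 stands out against its neighbours even though 5.6 °C might sit comfortably under a fixed alarm limit.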

Electric Arc Furnace (EAF)

Power losses that don’t trigger trips — but blow up costs

The fault no KPI captures

- Suboptimal electrode regulation

- Poor foamy slag consistency

- Scrap mix variability and uneven meltdown

- Transformer tap and reactance mismatch

3–7%

Increase in kWh/t from arc instability

30 GWh/yr

Excess electricity in a 1 Mtpa EAF shop at 30 kWh/t drift

In a 1 Mtpa EAF shop, even a 30 kWh/t drift equals 30 GWh of excess electricity per year, with no classical alarm to expose it.
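The arithmetic behind that figure is simple enough to check directly. The helper below is a minimal sketch; the function name is hypothetical, and the only inputs are the two numbers quoted above.

```python
def excess_energy_gwh(annual_tonnes, drift_kwh_per_tonne):
    """Excess electricity per year in GWh (1 GWh = 1,000,000 kWh)."""
    return annual_tonnes * drift_kwh_per_tonne / 1_000_000


# 1 Mtpa shop (1,000,000 t per year) with a 30 kWh/t drift
print(excess_energy_gwh(1_000_000, 30))  # 30.0
```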

How PlantOS™ intervenes

PlantOS™ correlates:

• Electrode movement behavior

• Current and voltage waveform variance

• Oxygen and carbon injection patterns

It identifies non-obvious instability signatures, situations where absolute values are acceptable but relationships are wrong, then prescribes arc-length corrections, slag chemistry stabilization actions, and power program optimization phases. These corrections restore energy coupling without slowing the heat, delivering measurable, AI-driven outcomes your energy manager can report.
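One way to picture "acceptable absolute values, wrong relationships" is to look at the variability of arc current rather than its level. The sketch below is an assumption-laden illustration, not the PlantOS™ method: the window length, the 5% limit, and the sample currents are invented for the example. It flags windows whose coefficient of variation is high even though the mean current stays close to the setpoint.

```python
from statistics import mean, pstdev


def coefficient_of_variation(samples):
    """Standard deviation divided by mean: variability relative to level."""
    return pstdev(samples) / mean(samples)


def unstable_windows(current_ka, window=4, cv_limit=0.05):
    """Return start indices of non-overlapping windows with high current variability.

    window and cv_limit are assumed values chosen for illustration only.
    """
    flagged = []
    for start in range(0, len(current_ka) - window + 1, window):
        chunk = current_ka[start:start + window]
        if coefficient_of_variation(chunk) > cv_limit:
            flagged.append(start)
    return flagged


# Hypothetical arc current samples in kA; every window averages about 50 kA
current = [50, 51, 49, 50, 50, 58, 43, 51, 50, 50, 51, 49]
print(unstable_windows(current))  # [4]
```

The middle window averages 50.5 kA, which a level-based check would accept, but its spread marks it as unstable.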

Reheating Furnaces

How safety margins turn into permanent fuel loss

The fault masked as a ‘safety margin’

60–70%

Of a rolling mill’s total thermal load consumed by reheating furnaces

12–15%

Fuel reduction achievable with correlated combustion optimization

How PlantOS™ intervenes

PlantOS™ overlays:

• Zone-wise thermal profiles

• Walking beam kinematics

• Burner efficiency behavior

• Historical slab temperature trajectories

It detects systemic over-firing patterns - not momentary spikes - and recommends fine-grained combustion corrections that operators validate heat by heat. Plants using such correlation approaches routinely achieve 12–15% fuel reduction without risking rolling quality.
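The distinction between a systemic pattern and a momentary spike can be made concrete with a persistence check. This is a minimal sketch under stated assumptions: the function name, the 15 °C margin, the window of five slabs, and the 80% fraction are hypothetical values, and the overshoot series are invented.

```python
def persistent_overfiring(overshoot_c, margin_c=15.0, window=5, min_fraction=0.8):
    """Return start indices of sliding windows where most slabs exceed the margin.

    overshoot_c holds discharge temperature minus target, per slab, in degrees C.
    A single hot slab cannot satisfy min_fraction, so isolated spikes are ignored.
    """
    flagged = []
    for start in range(len(overshoot_c) - window + 1):
        chunk = overshoot_c[start:start + window]
        above = sum(1 for value in chunk if value > margin_c)
        if above / window >= min_fraction:
            flagged.append(start)
    return flagged


spike = [2, 3, 40, 1, 2, 3, 2]        # one momentary spike
drift = [18, 20, 17, 19, 14, 21, 22]  # sustained over-firing
print(persistent_overfiring(spike))  # []
print(persistent_overfiring(drift))  # [0, 1, 2]
```

The spike series contains the single largest overshoot yet raises nothing, while the smaller but sustained overshoot is flagged in every window.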

Rolling Mills

Throughput looks fine — energy intensity worsens

The fault no one alarms for

- Roll wear increases friction

- Hydraulic imbalance raises resistance

- Descaler inefficiency raises load

- Misalignment causes motors to draw excess current

10–15%

Increase in energy per ton rolled from accumulated mechanical drift — with no effect on tonnage or schedule

KPIs stay green. Energy intensity silently worsens. This is exactly the blind spot that AI predictive maintenance and continuous asset monitoring are designed to close: catching drift before it becomes cost.

How PlantOS™ intervenes

PlantOS™ correlates:

• Motor current vs rolling force

• Hydraulic pressures vs strip dimensions

• Descaling performance vs surface drag

Instead of generic ‘high load’ alerts, it surfaces force–energy mismatches, guiding maintenance and process teams to correct the true cause — not the symptom.
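A force–energy mismatch can be illustrated as a residual against a healthy baseline. The sketch below is an assumption, not a description of PlantOS™ internals: it fits a straight line of motor current against rolling force on reference passes, then flags recent passes that draw noticeably more current than the force explains. The function names, the 8% tolerance, and all data values are hypothetical.

```python
def fit_line(xs, ys):
    """Ordinary least-squares fit of y = slope * x + intercept."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x


def mismatches(force, current, slope, intercept, tolerance=0.08):
    """Return indices of passes whose current exceeds the baseline by more than tolerance."""
    flagged = []
    for i, (f, c) in enumerate(zip(force, current)):
        expected = slope * f + intercept
        if (c - expected) / expected > tolerance:
            flagged.append(i)
    return flagged


# Hypothetical baseline passes: rolling force (MN) and motor current (A)
baseline_force = [10.0, 12.0, 14.0, 16.0]
baseline_current = [500.0, 600.0, 700.0, 800.0]
slope, intercept = fit_line(baseline_force, baseline_current)

recent_force = [11.0, 13.0, 15.0]
recent_current = [555.0, 720.0, 760.0]
print(mismatches(recent_force, recent_current, slope, intercept))  # [1]
```

The second recent pass draws about 11% more current than its force predicts, which a generic high-load alert on current alone would not separate from a legitimately heavier pass.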

Utilities

Compressed air & fans — the permanent energy leak plant heads underestimate most

Utilities consume a substantial share of a steel plant’s total electricity. Compressed air leaks alone waste a significant proportion of generated air in poorly monitored systems. The challenge is not awareness — it is attribution. Which compressor? Which distribution leg? Which demand is artificial vs productive?

20–30%

Of a steel plant’s total electricity consumed by utilities

25–40%

Of generated compressed air wasted in poorly monitored systems

How PlantOS™ intervenes

PlantOS™ fingerprints:

• Compressor load patterns

• Pressure–flow relationships

• Usage behavior tied to production states

It exposes structural waste, not random leaks — allowing operators to attack the biggest energy drains first.
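Tying air usage to production states is what separates artificial demand from productive demand. As a rough sketch, with hypothetical state labels, function names, and flow values, the code below averages compressed-air flow per production state and reports how much air is still consumed when the line is idle.

```python
from collections import defaultdict


def mean_flow_by_state(samples):
    """Average flow per production state from (state, flow) samples."""
    totals = defaultdict(lambda: [0.0, 0])
    for state, flow in samples:
        totals[state][0] += flow
        totals[state][1] += 1
    return {state: total / count for state, (total, count) in totals.items()}


def idle_share(samples, idle="idle", running="running"):
    """Idle-state flow as a fraction of running-state flow.

    A high share suggests leaks or open blow-offs rather than productive use.
    """
    means = mean_flow_by_state(samples)
    return means[idle] / means[running]


# Hypothetical flow samples in m3/min, labelled with the production state
samples = [
    ("running", 100.0), ("running", 110.0), ("idle", 30.0),
    ("idle", 33.0), ("running", 105.0),
]
print(f"{idle_share(samples):.0%}")  # 30%
```

Running the same comparison per compressor or per distribution leg is one way to rank where the largest structural losses sit.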

Why DCS Alarms and Energy Reports Miss What Actually Matters

Why Traditional Monitoring Fails and PlantOS™ Succeeds

Steel plants already have data. What they lack is contextual intelligence. DCS alarms monitor thresholds. Energy reports aggregate results. Maintenance systems log failures. PlantOS™ works between them.

It detects anomalies early (before thresholds are breached), correlates across process, energy, and equipment, prescribes operator-validated actions, and builds a living memory of “what worked where”. This approach aligns with industrial AI research showing that self-supervised, plant-scale anomaly detection dramatically outperforms static alarms in steel environments.

The Gap That Remains, and Where It Lives

Which process deviation, interacting with which emerging asset condition, is inflating energy per ton of steel right now — and by how much?

Closing this gap requires more than dashboards or alarms. It requires continuous correlation between process behavior, energy intensity, and asset health — followed by prescriptive actions that operators can validate and trust. This is precisely where Infinite Uptime’s Process Business, built on PlantOS™, operates. PlantOS™ identifies energy-relevant anomalies in steel operations while they are still recoverable:

- Blast furnace gas-flow or tuyere imbalance before rising fuel rate hardens into sustained cost

- EAF arc-stability degradation before kWh per ton increases compound across heats

- Reheating furnace over-firing masked as safety margin while fuel efficiency can still be restored

- Rolling-mill force–energy mismatches that never trip alarms because throughput never drops

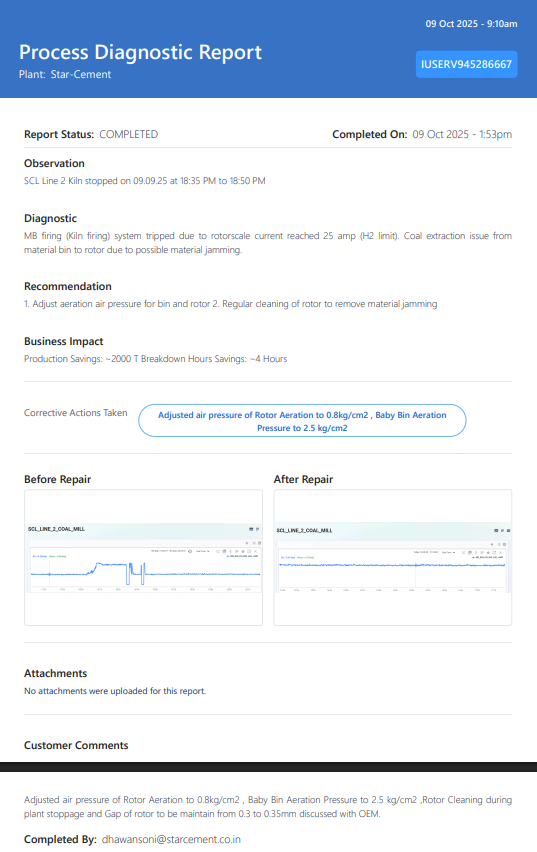

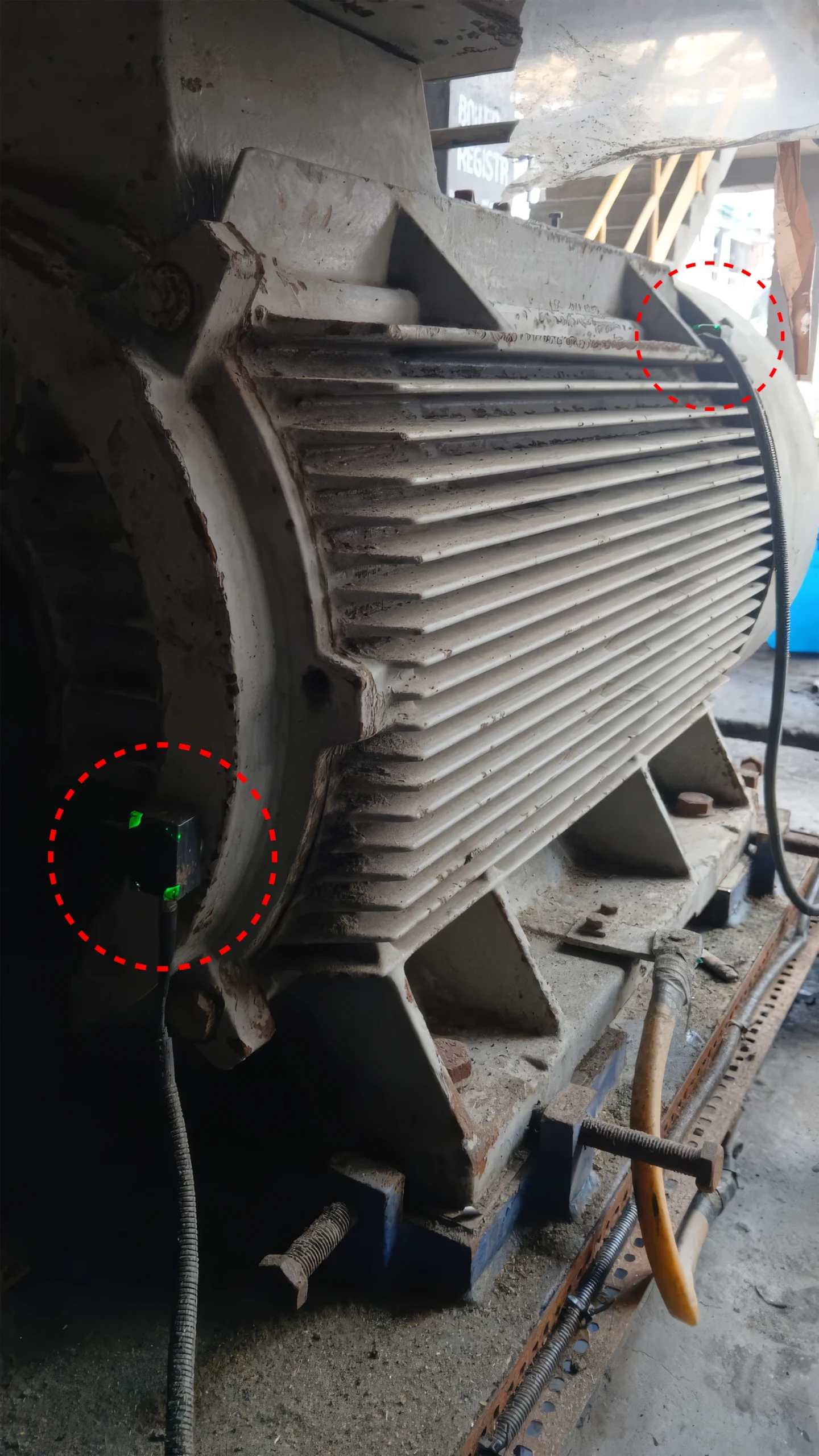

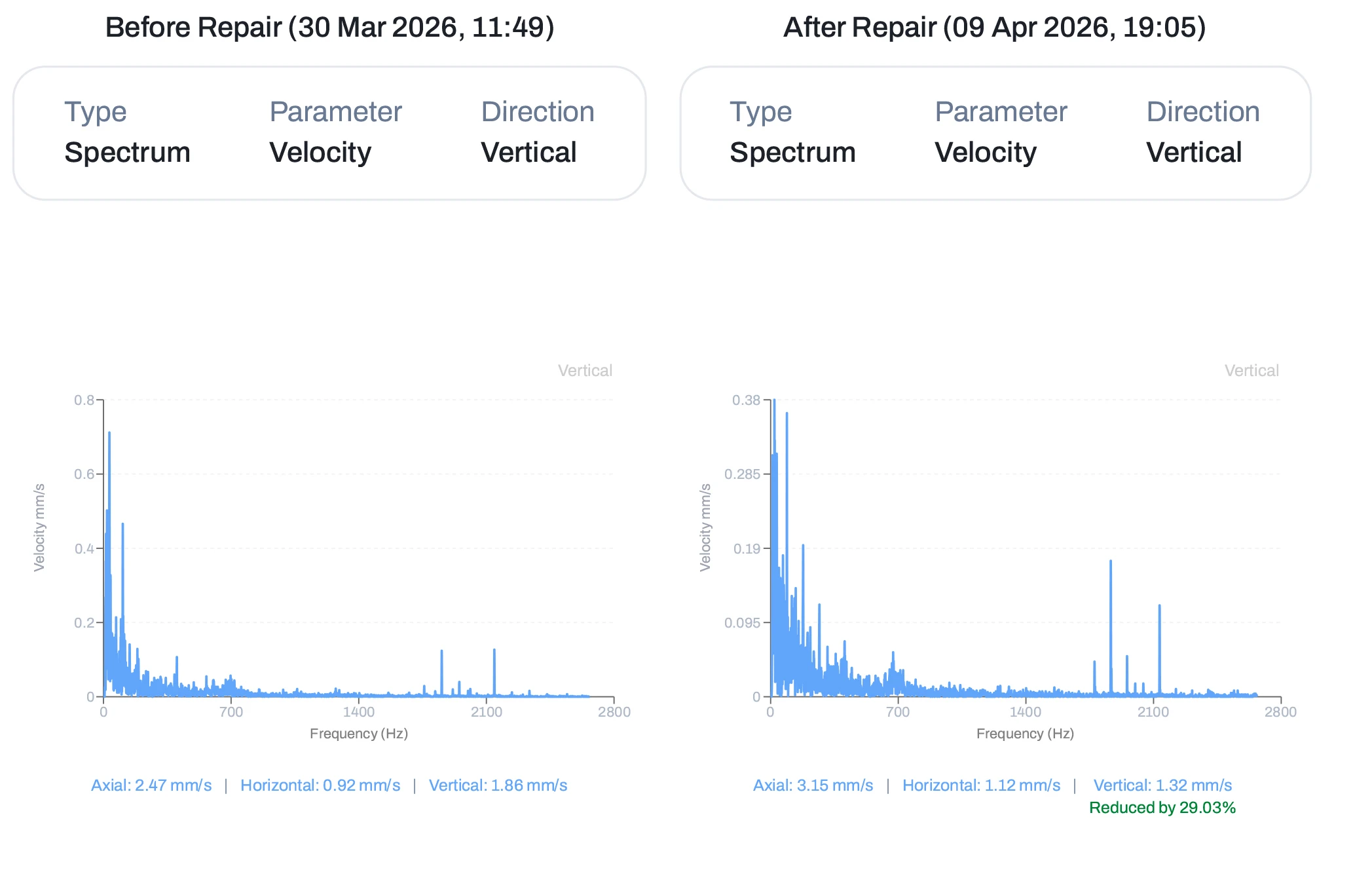

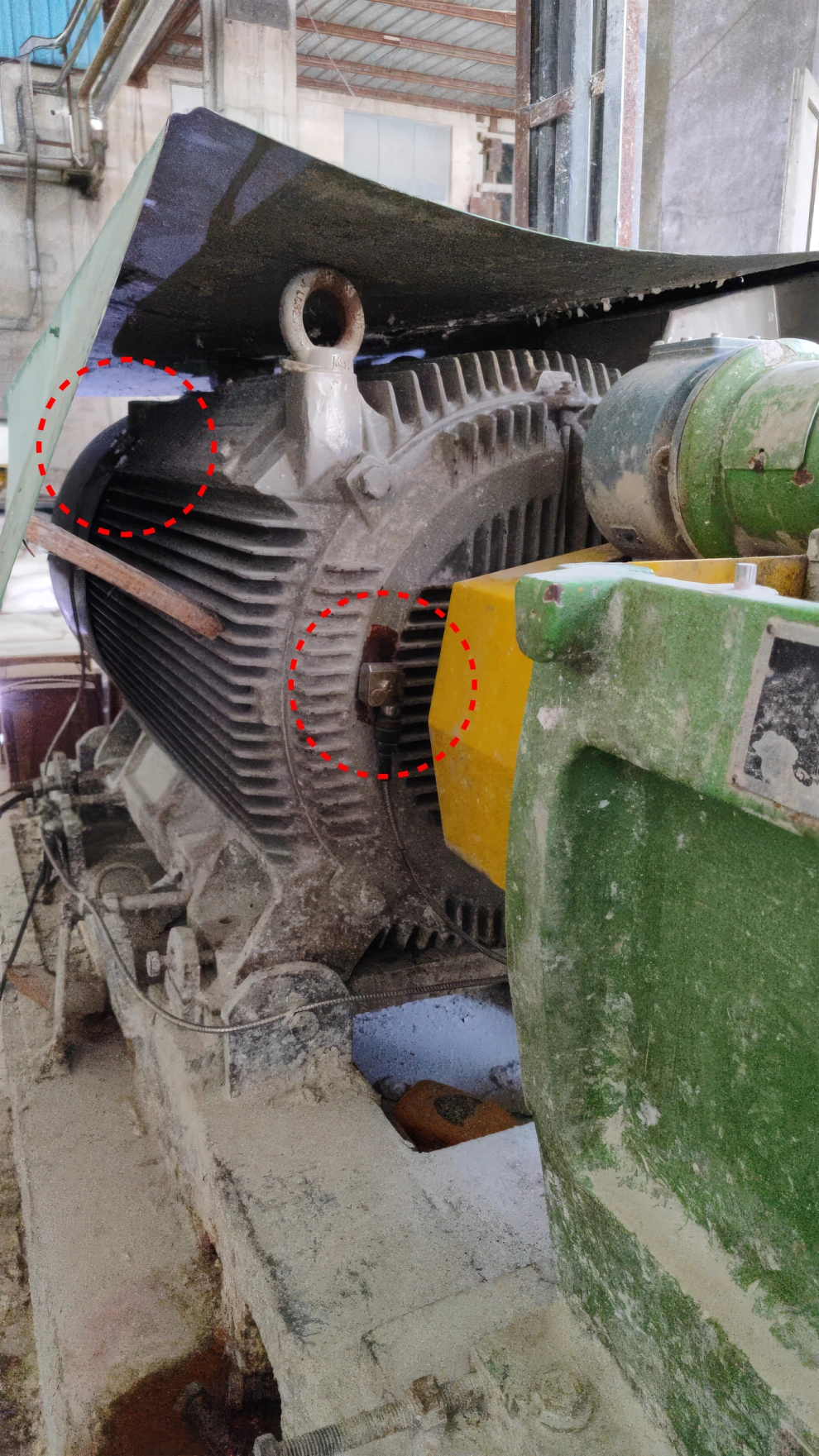

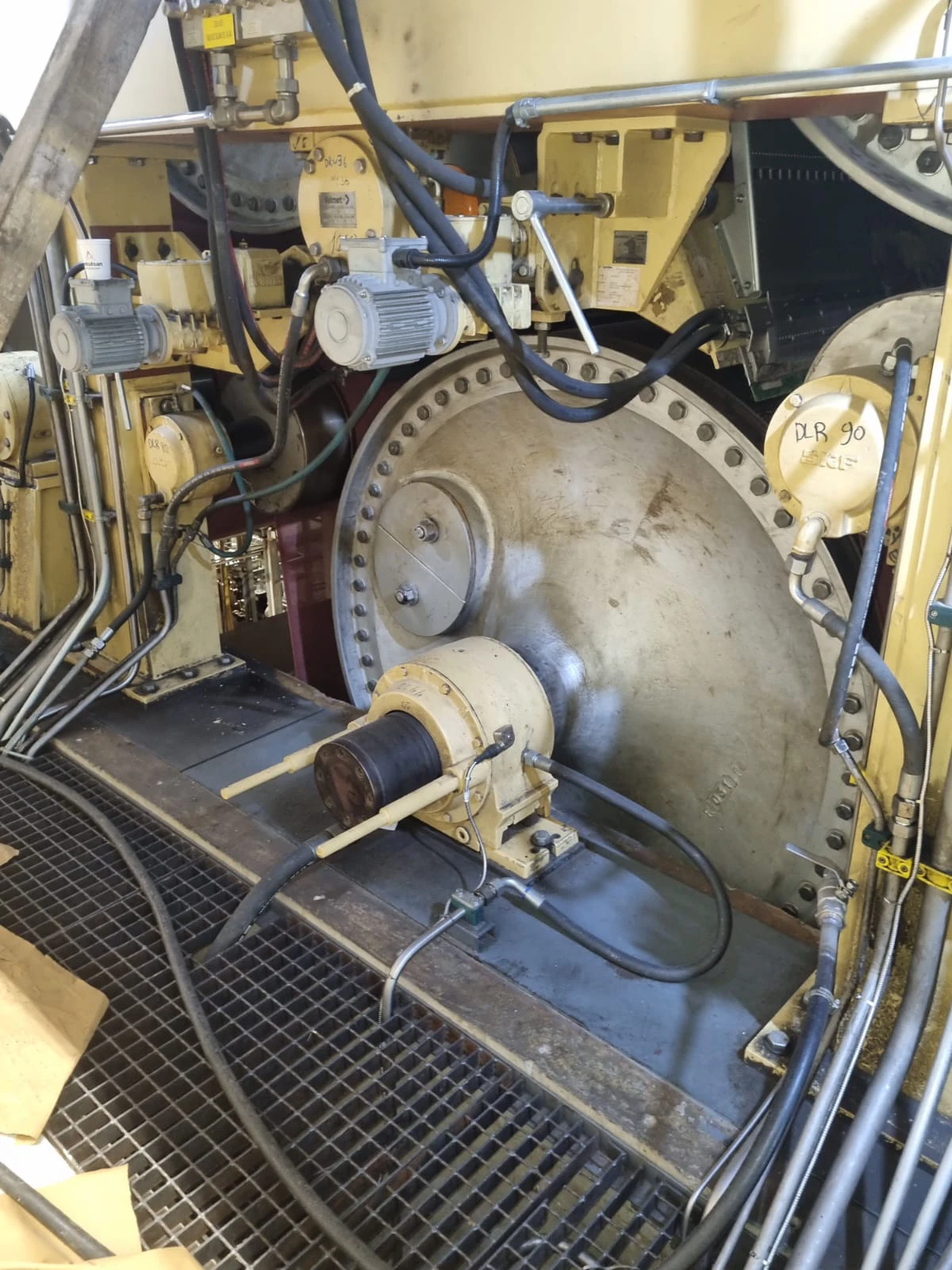

In the Field: What Operator-Validated Outcomes Look Like

ID Fans

Clear business impact: the report states that the action prevented accelerated oil degradation, excess chiller energy use (~226 kWh/event), and equipment thermal stress, and improved MTBF while avoiding repeat energy and maintenance costs.

This is not just a case study prepared for a presentation. This is a Process Prescription Report generated by PlantOS™, acted on and validated by plant operators, and time-stamped to the hour. The observation, diagnostic, recommendation, corrective action, and business impact are all in one record: auditable, explainable, and reporting-ready.

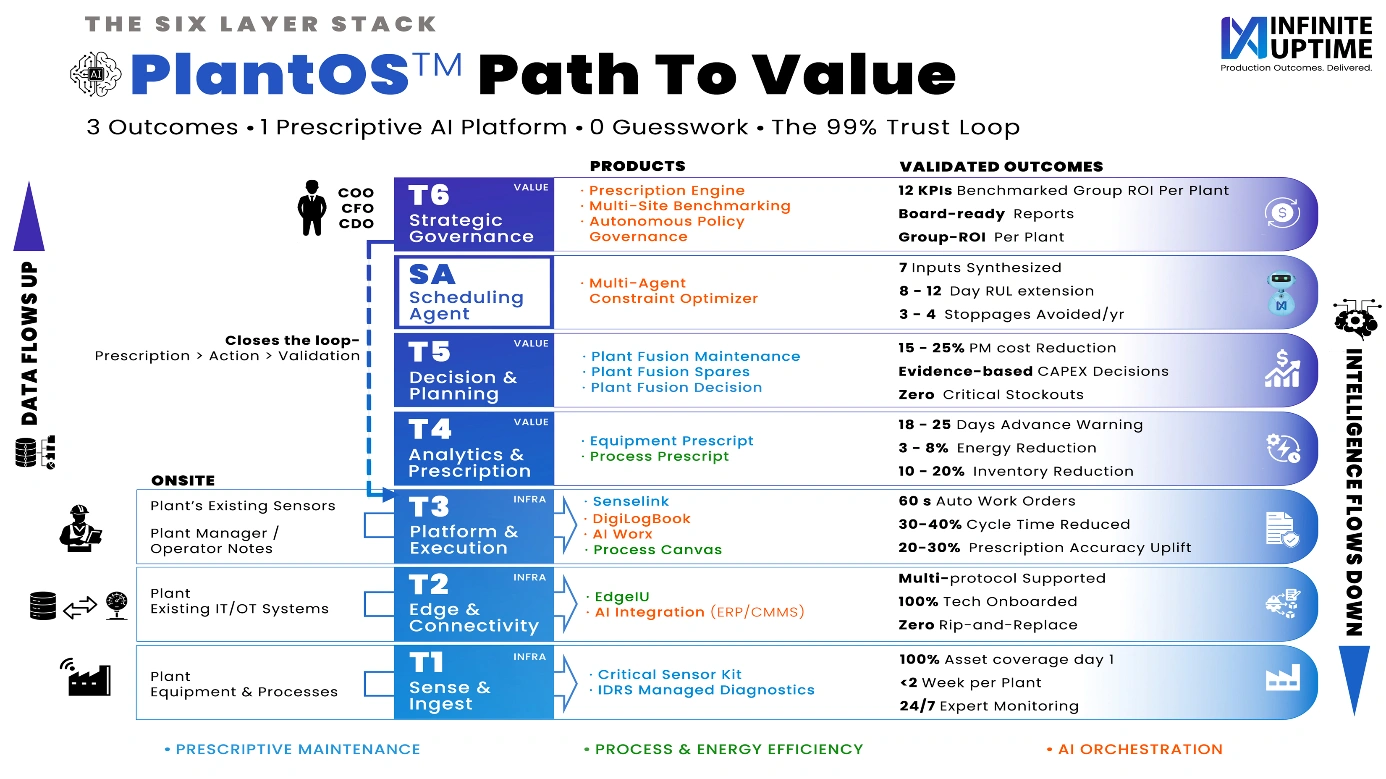

The architecture above shows how PlantOS™ moves from raw plant data at the equipment and process layer (T1) through edge connectivity, platform execution, and prescriptive analytics (T2–T4), to decision planning, AI orchestration, and strategic governance (T5–T6). For steel energy management, the critical path runs through Process Canvas at T3, which makes energy drift visible and explainable, and Process Prescript at T4, which converts correlated anomalies into timed operator actions. The 3–8% energy reduction validated at T4 is not a modelled estimate. It is an outcome confirmed by operators who acted on prescriptions, closed the loop, and logged the result.

This is what positions PlantOS™ as Industrial Agentic AI for Operator-Validated Outcomes — not a monitoring layer, but a decision layer that closes the loop between data, human judgment, and measurable impact.

Process-induced faults inflate energy, carbon, and cost while production looks stable. Infinite Uptime’s PlantOS™ closes that blind spot – not by replacing operators, but by giving them actionable, trusted insight exactly where conventional systems go silent. That is how energy is saved. That is how carbon is controlled.

From Predictive Maintenance to Prescriptive Maintenance: What the Difference Means on the Plant Floor

Predictive maintenance tells you a bearing will fail in 14 days. Prescriptive maintenance tells you exactly which bearing, which lubrication procedure, and which shift to do it in, before the failure window opens. The gap between these two is where millions are lost in steel plants. AI predictive maintenance systems flag the risk. Prescriptive AI closes it. PlantOS™ is built on the second: continuous condition monitoring and asset monitoring that generates operator-validated actions, not just alerts. That is AI for outcomes.

Infinite Uptime’s PlantOS™ helps steel manufacturing plants identify and correct energy-relevant process anomalies in real time, before they become carbon costs. To explore what this looks like for your operations, get in touch here →

Frequently Asked Questions

Process-induced faults are deviations caused by operating conditions (load, control settings, system interactions) rather than mechanical failure. These faults often do not trigger alarms but lead to higher energy use, instability, and hidden cost losses.

They do not:

- Correlate data across systems

- Detect subtle deviations before limits are breached

- Identify relationships between process, energy, and asset health

- Predictive maintenance: tells you what might fail and when

- Prescriptive maintenance: tells you exactly what to fix, why it is happening, what action to take, and when to act

- Process behavior

- Energy consumption

- Equipment signals

- Granular, asset-level energy data

- Traceable operational records

It is an outcome of better operational decisions, including:

- Optimized process control

- Reduced drift

- Corrected inefficiencies

- Detect failures

- Trigger alarms

- Detects early deviations

- Explains root cause

- Prescribes exact actions

- Tracks outcomes
